I am a Researcher in Human-Computer Interaction, Design, and Engineering.

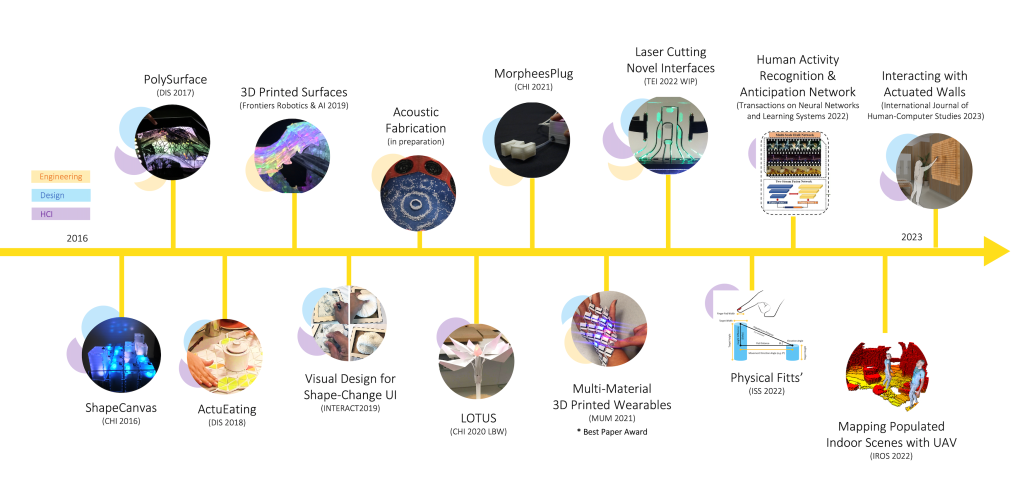

I have nearly 10 years of experience conducting both quantitative and qualitative research. My work focuses on developing new digital fabrication methods for novel technologies such as shape-changing displays and wearables. My research has been published at venues across Human-Computer Interaction, Interactive Systems Design, Robotics, and Computer Vision.

Research Interests & Expertise

Human-Computer Interaction

– Shape-Changing Displays

– Wearable Technologies

– Tangibles and Data Physicalization

My expertise in Human-Computer Interaction (HCI) includes the design and development of shape-changing displays, data physicalization, and wearable technologies. By conducting a range of quantitative and qualitative user studies, I have been able to better understand how people use and interact with these emerging tangible technologies.

Design Research

– Computer-Aided Design (CAD)

– Collaborative Design

– Research-Through-Design

– Iterative Prototyping

I have a strong background in design research, specializing not only in the aesthetics and functionality of interactive hardware systems and interfaces but also in developing theoretical design principles, especially to establish new design spaces for the next generation of fabrication methods (e.g. functional fabrication) and technologies.

Engineering Research

– Mechanical and Soft Robotics

– Material Science (e.g. meta-materials and auxetics)

– Acoustics (e.g. particle levitation and manipulation)

My expertise in hardware and mechanical engineering for actuation has translated to robotics, and my work is now moving towards soft robotic actuation solutions. My research also looks into material science and computational geometry (via MATLAB) for the design of auxetic structures that can be utilized for shape-morphing interfaces.

Fabrication Research

– Stereolithography (SLA)

– Multi-material fused filament fabrication (FFF, also known as FDM)

– Laser Cutting Techniques

I specialize in various additive manufacturing methods including SLA and multi-material FDM 3D printing. I have published numerous research papers on digital fabrication approaches over the last 5 years that have been adopted by other researchers. Currently, I am working on developing new forms of fabrication methods using acoustic technologies such as ultrasound.

Selected Projects

Multi-Material 3D Printing for Prototyping Deformable Wearables

Aluna Everitt, Alexander Keith Eady, and Audrey Girouard. 2021. Enabling Multi-Material 3D Printing for Designing and Rapid Prototyping of Deformable and Interactive Wearables. In 20th International Conference on Mobile and Ubiquitous Multimedia (MUM 2021), December 5-8, 2021, Leuven, Belgium. ACM, New York, NY, USA, 11 pages. https://doi.org/10.1145/3490632.3490635. * Best Paper Award *

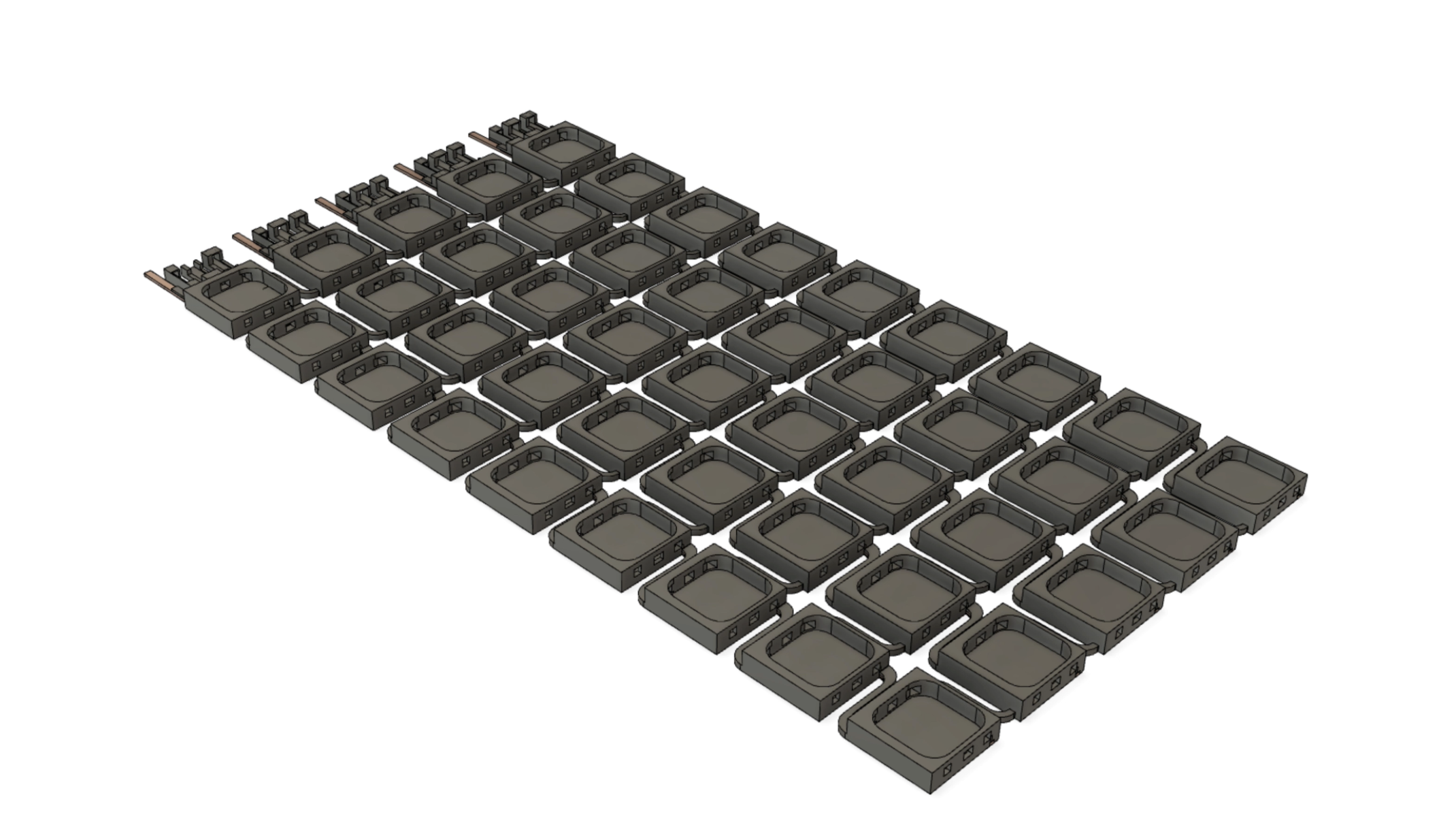

Deformable surfaces with interactive capabilities provide opportunities for new mobile interfaces such as wearables. Yet current fabrication and prototyping techniques for deformable surfaces that are both flexible and stretchable are still limited by complex structural design and mechanical surface rigidity. We propose a simplified rapid fabrication technique that utilizes multi-material 3D printing for developing customizable and stretchable surfaces for mobile wearables with interactive capabilities embedded during the 3D printing process. Our prototype, FlexiWear, is a dynamic surface with embedded electronic components that can adapt to mobile body shape/movement and be applied to contexts such as healthcare and sports wearables. We describe our design and fabrication approach using a commercial desktop 3D printer, the interaction techniques supported, and possible application scenarios for wearables and deformable mobile interfaces. Our approach aims to support rapid development and exploration of deformable surfaces that can adapt to body shape/movement.

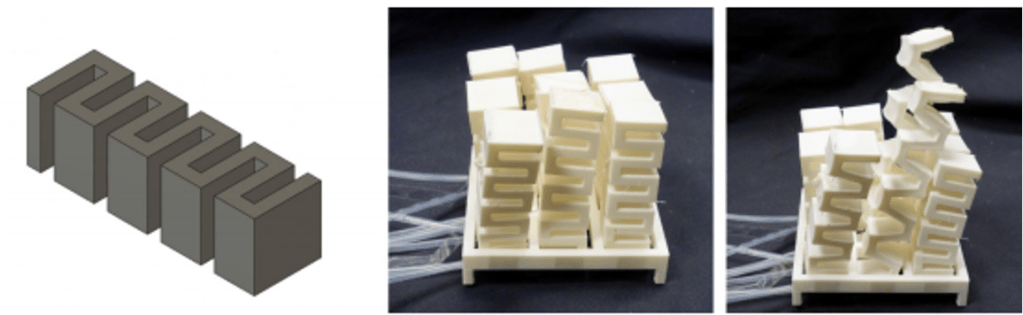

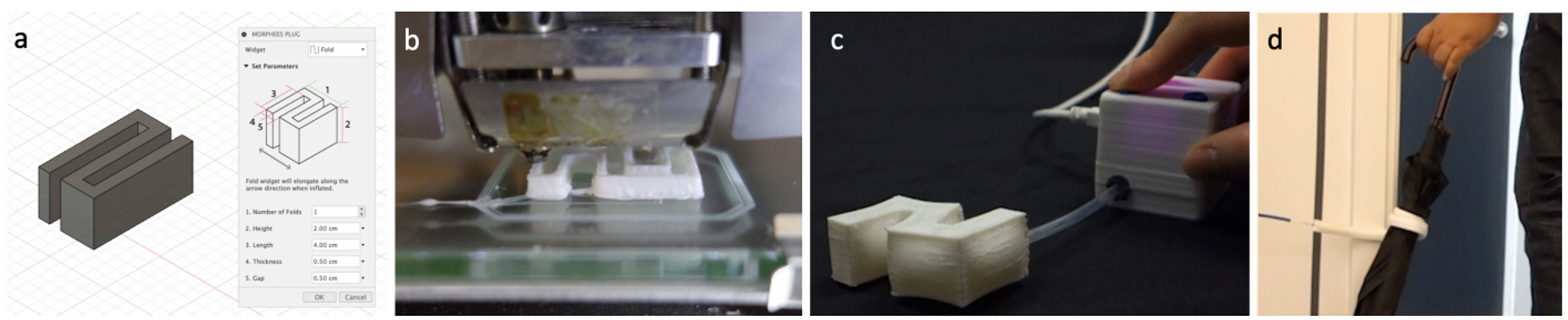

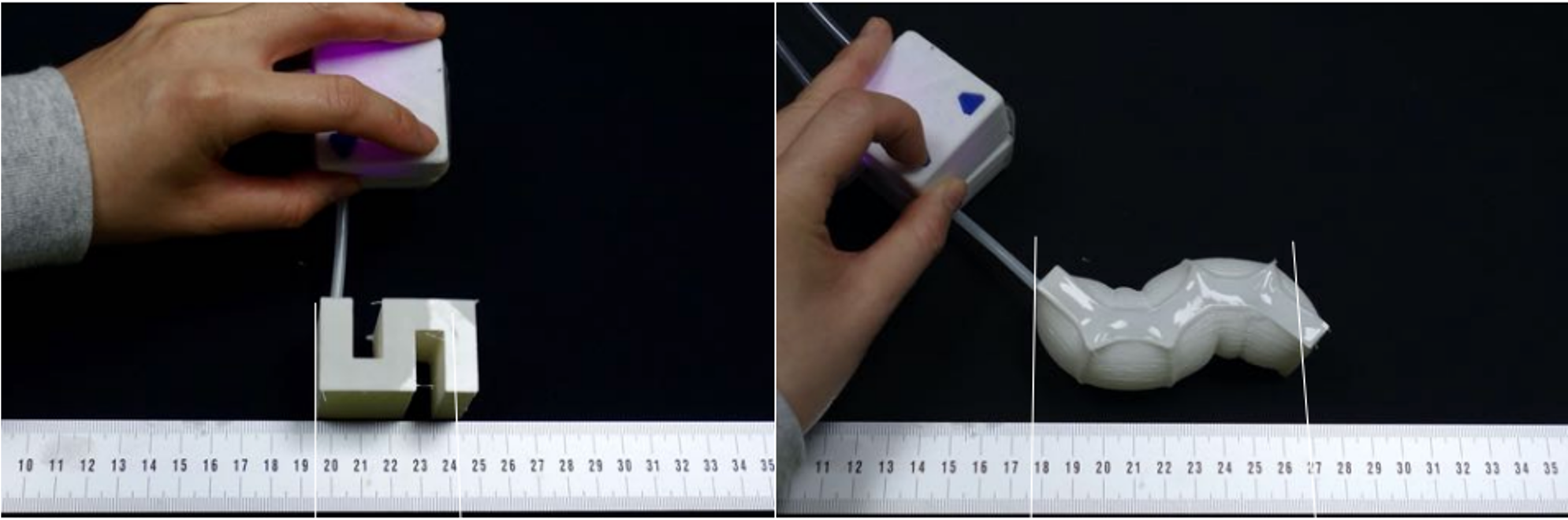

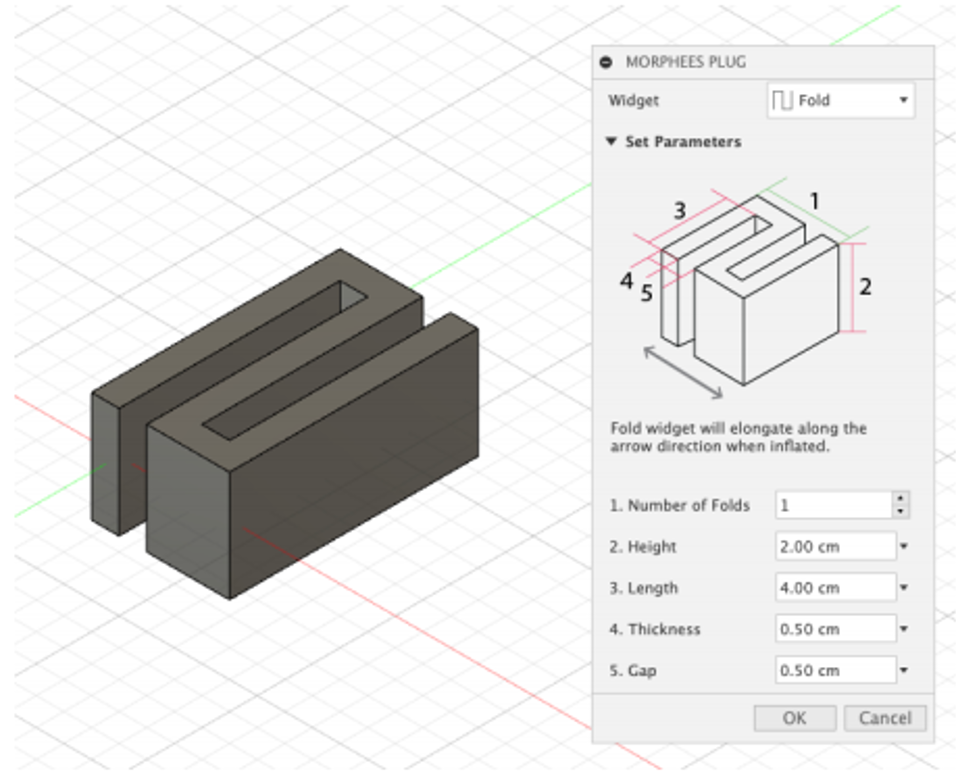

MorpheesPlug: A Toolkit for Prototyping Shape-Changing UIs

3D Printing Deformable Surfaces for Shape-Changing Displays

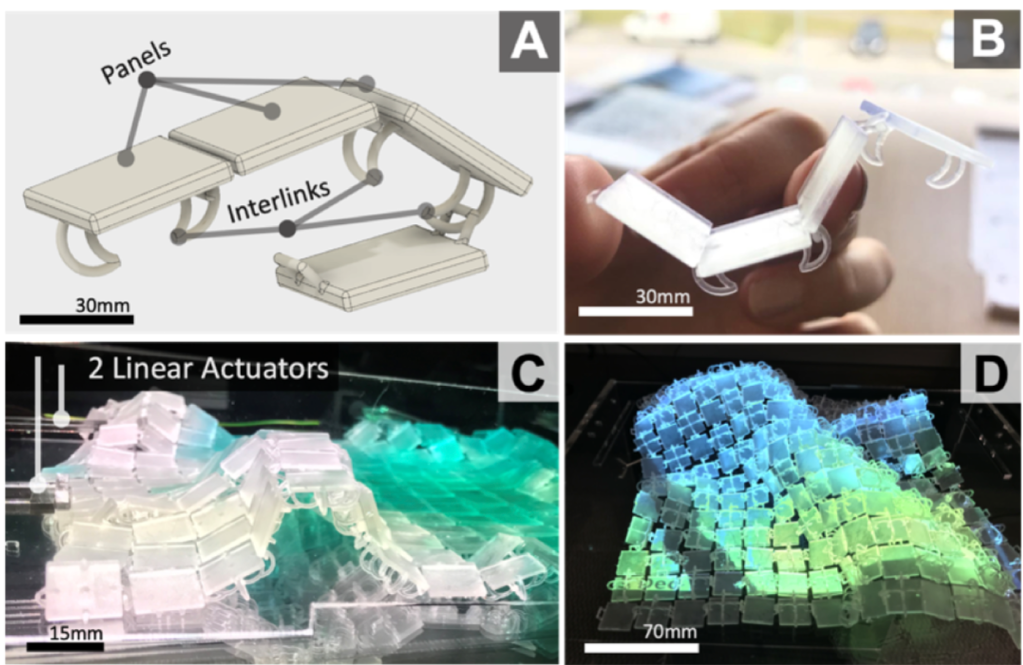

Aluna Everitt and Jason Alexander. 2019. 3D Printed Deformable Surfaces for Shape-Changing Displays. Frontiers in Robotics and AI 6, Article 80 (28 August 2019). https://doi.org/10.3389/frobt.2019.00080

We use interlinked 3D printed panels to fabricate deformable surfaces that are specifically designed for shape-changing displays. Our exploration of 3D printed deformable surfaces, as a fabrication technique for shape-changing displays, shows new and diverse forms of shape output, visualizations, and interaction capabilities. This article describes our general design and fabrication approach, the impact of varying surface design parameters, and a demonstration of possible application examples. We conclude by discussing current limitations and future directions for this work.

Laser Cutting Deformable Surfaces for Shape-Changing Displays

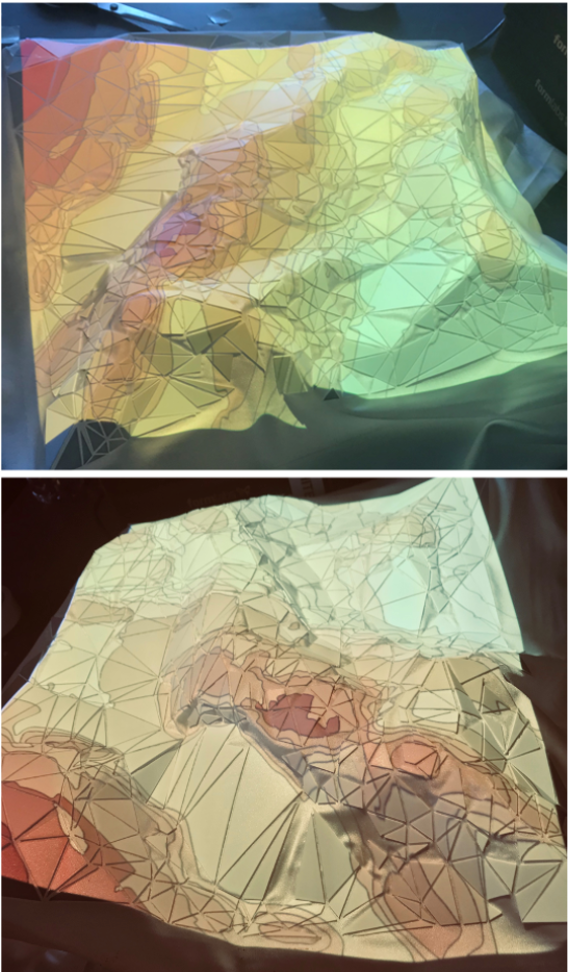

Aluna Everitt and Jason Alexander. 2017. PolySurface: A Design Approach for Rapid Prototyping of Shape-Changing Displays Using Semi-Solid Surfaces. In Proceedings of the 2017 Conference on Designing Interactive Systems (DIS ’17). Association for Computing Machinery, New York, NY, USA, 1283–1294. https://doi.org/10.1145/3064663.3064677

We present a design approach for rapid fabrication of high-fidelity interactive shape-changing displays using bespoke semi-solid surfaces. This is achieved by segmenting virtual representations of the given data and mapping them to a dynamic physical polygonal surface. First, we establish the design and fabrication approach for generating semi-solid reconfigurable surfaces. Secondly, we demonstrate the generalizability of this approach by presenting design sessions using datasets provided by experts from a diverse range of domains. Thirdly, we evaluate user engagement with the prototype hardware systems that are built. We learned that all participants, none of whom had previously interacted with shape-changing displays, were able to successfully design interactive hardware systems that physically represent data specific to their work. Finally, we reflect on the content generated to understand whether our approach is effective at representing intended output based on a set of user-defined functionality requirements.

Exploring Shape-Changing Content Generation by the Public

Aluna Everitt, Faisal Taher, and Jason Alexander. 2016. ShapeCanvas: An Exploration of Shape-Changing Content Generation by Members of the Public. In Proceedings of the 2016 CHI Conference on Human Factors in Computing Systems (CHI ’16). Association for Computing Machinery, New York, NY, USA, 2778–2782. https://doi.org/10.1145/2858036.2858316

Shape-changing displays – visual output surfaces with physically-reconfigurable geometry – provide new challenges for content generation. Content design must incorporate visual elements, physical surface shape, react to user input, and adapt these parameters over time. The addition of the ‘shape channel’ significantly increases the complexity of content design but provides a powerful platform for novel physical design, animations, and physicalizations. In this work we use ShapeCanvas, a 4×4 grid of large actuated pixels, combined with simple interactions, to explore novice user behavior and interactions for shape-change content design. We deployed ShapeCanvas in a café for two and a half days and observed users generate 21 physical animations. These were categorized into seven categories and eight were directly derived from people’s personal interests. This paper describes these experiences, the generated animations and provides initial insights into shape-changing content design.